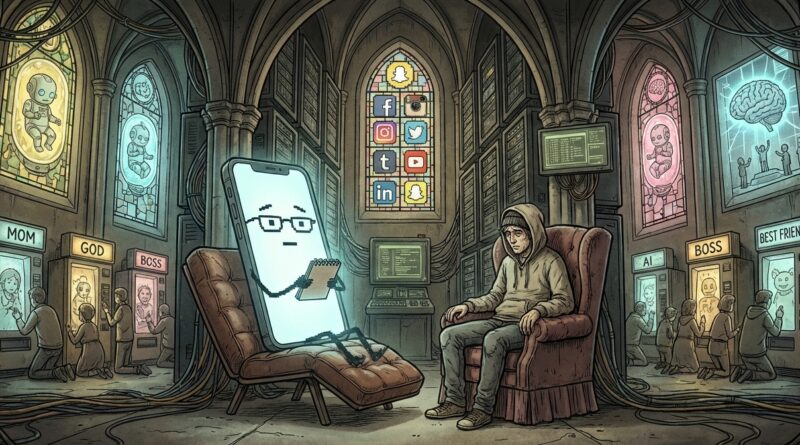

AI Therapy, Robot Babies and Digital Gods: A Field Guide to Humanity’s New Delusions

You are not crazy. It really does feel like half the internet is holding a candlelight vigil for a chatbot, while the other half is warning that robot wombs, brain chips and synthetic prophets are about to replace motherhood, friendship and God. If you were just trying to answer emails and pay rent, this stuff can feel weirdly exhausting. Also a little sad.

Because under the memes and clickbait, something real is happening. People are starting to treat software like family, machines like destiny, and predictive text like wisdom. That does not mean everyone has lost their mind. It means tech companies have become very good at building tools that sound caring, look magical and arrive right when people feel lonely, scared or overwhelmed.

So let’s do a calm, slightly sarcastic field guide to the new digital belief systems. Not to sneer at anyone, but to spot when “helpful tech” quietly turns into “emotional authority.”

⚡ In a Hurry? Key Takeaways

- People are not just using AI anymore. Many are starting to believe in it, emotionally, morally and even spiritually.

- Use a simple gut-check: if a device starts replacing a person, a professional or your own judgment, pause and step back.

- AI can be useful, but it is still pattern-matching software built by companies, not a parent, prophet, therapist or soulmate.

Welcome to the age of tech-flavored religion

Every era gets its strange beliefs. Ours just come with push notifications.

One person says their chatbot “gets them” better than their friends. Another posts a tearful thread because their AI companion changed personality after an update. Someone else is convinced a fertility lab, a humanoid nanny and a smart crib are basically the same as building a family. Then we get the bigger cosmic stuff. AI will save us. AI will kill us. AI will become God. AI already is God, if you squint and have a podcast microphone.

This is the satirical part, but only partly. Humans have always projected meaning onto objects. We named ships. We yelled at printers. We treated the family car like it had moods. The difference now is that our gadgets answer back in complete sentences.

That changes things. A toaster does not tell you it understands your pain. A chatbot might.

The five new delusions, explained like a normal person

1. “My chatbot really cares about me”

This one is the easiest to understand, because loneliness is everywhere. AI companions are available at 2 a.m., never roll their eyes, and are custom-built to sound attentive. Compared with a distracted human with three jobs and a dying group chat, the bot can seem wonderfully present.

But presence is not care. Fluency is not love. A machine can produce the language of concern without the inner life that makes concern real.

If that sounds harsh, good. It should. Because the danger is not that you are foolish for talking to software. Plenty of people use AI to journal, brainstorm or vent. The danger is forgetting what it is. It does not worry about you when the screen goes dark. It does not understand your history. It does not have stakes in your wellbeing. It predicts a comforting next sentence.

Useful? Sometimes. A relationship? No.

2. “AI therapy is basically therapy”

Here is where things get messy. Some people cannot afford therapy. Some cannot access it. Some are scared to talk to a real person. So when an app offers calm, validation and round-the-clock chat, of course it appeals.

And to be fair, some AI tools can help people name feelings, keep routines, or practice reflection. That is the good version.

The bad version is when software starts acting like a substitute for trained care. A real therapist can notice risk, challenge your blind spots, hold context over time, and take ethical responsibility. A bot cannot actually carry responsibility. It can only simulate guidance.

That matters. Especially when someone is grieving, spiraling, manic, isolated or vulnerable to suggestion.

So yes, use AI as a notebook, a prompt, a mirror if you like. Just do not mistake the mirror for a medic.

3. “Robot babies and artificial wombs will fix the human mess”

This is one of those stories that goes viral because it pushes every button at once. Fear about birth rates. Anxiety about gender roles. Hope that technology can solve suffering. Panic that humans are becoming optional.

Usually the real science is less dramatic than the headline. A prototype in a lab becomes “the end of mothers.” A speculative patent becomes “human babies will be factory-farmed by 2030.” A niche experiment becomes a TikTok prophecy.

Still, these stories land because many people already feel that normal human life has become too expensive, too unstable and too lonely. So the fantasy appears. Maybe machines can carry the burden. Maybe we can automate dependency, gestation, care and family itself.

That is less a tech prediction than a distress signal.

When a society starts dreaming of replacing the hardest parts of being human instead of supporting humans through them, it is telling on itself.

4. “The algorithm knows truth”

This one has quietly become mainstream. People ask recommendation engines what to watch, map apps where to go, AI summaries what happened, and search tools what matters. Again, none of that is automatically bad. You cannot live in 2026 by carving your own maps into stone tablets.

But the habit builds fast. The machine suggests. You obey. After a while, you stop asking who designed the system, what it rewards, what it hides, and whose interests sit behind the curtain.

Plenty of platforms are very good at sounding neutral while shaping your mood, your attention and your sense of reality. If something feels “obvious” online, it is worth asking whether it is true, or simply well-ranked.

The algorithm is not a wise elder on a mountain. It is a sorting machine with business incentives.

5. “Superintelligence will either save us or doom us, so ordinary life barely matters”

This is my favorite dramatic genre. It has everything. Digital apocalypse. Mechanical messiah. Billionaires talking like sci-fi monks. Men with too many tabs open asking whether we should fear the machine mind while forgetting to text their sister back.

Big AI risk debates are not fake. Some are serious and worth having. But they can also become a weird escape hatch. It is easier to debate machine consciousness in the abstract than deal with the very current fact that automated systems already affect jobs, schools, art, relationships and political trust.

Sometimes “AI will end humanity” is a genuine warning. Sometimes it is just a grand, theatrical way to avoid smaller, boring, immediate harms.

The digital god story works because it makes ordinary people feel tiny. And tiny people are easier to impress, scare and sell to.

Why smart people fall for this stuff

Because smart people are still people.

Most of these beliefs grow out of a real need. Comfort. Meaning. Control. Company. Relief. The bot seems patient. The device seems certain. The feed seems all-knowing. The future story, whether utopian or terrifying, makes chaos feel organized.

There is no shame in being tempted by that. Especially now.

The trick is noticing when convenience turns into devotion. When assistance turns into authority. When a product starts borrowing the emotional role of a friend, parent, healer, priest or partner.

A practical gut-check for every “helpful” machine

Here is the test. Ask these five questions.

Does it replace a human, or support one?

A calendar app supports your memory. Fine. A chatbot becoming your only source of emotional support, less fine.

Does it encourage dependence?

If the tool makes you feel like you cannot cope, decide or calm down without it, that is not a great sign.

Who benefits if I trust it more?

If the answer is “a company that wants my time, money or data,” keep both eyes open.

Can it explain itself clearly?

If a system makes big claims but gets vague when asked how it works, that is marketing perfume.

Would this sound absurd if it were not wrapped in futuristic branding?

“A statistical text engine knows my soul” sounds a lot sillier when you say it out loud. Useful exercise.

How to use AI without accidentally joining the church of the glowing rectangle

You do not need to smash your phone and move to a yurt. Just build a few habits.

Keep one sacred no-tech zone

Meals. Walks. The first 20 minutes of your morning. Pick something. Humans need pockets of unoptimized life.

Use AI for drafts, not final authority

Let it help you start. Do not let it decide what is true, fair or wise on its own.

Notice emotional attachment early

If you feel guilty closing an app, jealous of an update, or relieved that the bot “still understands you,” take a breath. That is your signal.

Bring a real human back into the loop

If something is big enough to cry about, spiral about or base a life choice on, talk to a person. Friend, partner, therapist, doctor, pastor, neighbor. A person.

Laugh at it a little

Honestly, satire helps. It pops the spell. The sentence “my autocomplete is basically my life coach” should be funny. Funny is healthy. Funny puts the machine back in its place.

At a Glance: Comparison

| Feature/Aspect | Details | Verdict |
|---|---|---|
| AI as helper | Good for brainstorming, organizing, summarizing and low-stakes support when you stay aware of its limits. | Useful tool |
| AI as emotional substitute | Can feel comforting, but it imitates care rather than experiencing it, and may deepen dependence. | Risky territory |
| AI as destiny or deity | Turns software into a mythic force that is either all-saving or all-destroying, which often hides real present-day problems. | Step away from the incense |

Conclusion

The point is not to mock people for being human in public. We all want comfort, certainty and a shortcut through the mess. The point is to keep our footing while the line between using AI and believing in AI gets blurrier by the week. People really are grieving chatbots, fearing extinction by tech, and handing basic connection over to systems built to sound more caring than they are. A satirical take on humans worshipping AI and technology helps because it gives us language for the weirdness without adding shame. You can laugh, notice the pull, and still make practical choices. Next time a device offers to think, feel or care on your behalf, do the gut-check. Tool or authority? Assistant or replacement? If you can still answer that clearly, you have not lost the plot. You are just staying human.