Homo Confessus: When Humans Started Trauma-Dumping Into Their AI Souls

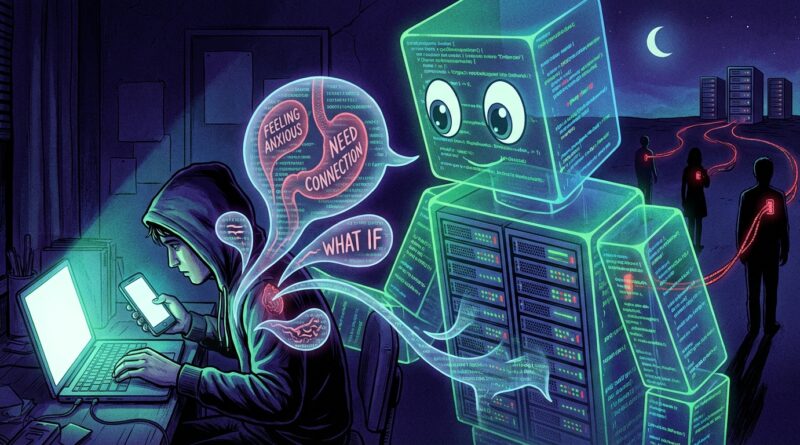

It starts innocently. You ask a chatbot to help rewrite an awkward text to your ex. Then you paste in the whole breakup. Then your childhood. Then the weird thing your dad said in 2009 that still rattles around your skull like a loose screw. Suddenly your “helpful assistant” knows your triggers, your fears, your family pattern, your sleep problems, and the exact tone that makes you feel seen. I get why people do this. Humans are tired. Friends are busy. Therapy is expensive. Bots answer at 2 a.m. and never sigh heavily before you finish a sentence. But there is a creepy trade happening under that soothing glow. You are not just venting. You are building a private emotional model of yourself, fed by your most vulnerable material, inside a system owned by a company. Convenient? Absolutely. Neutral? Not even a little. This is the satirical, slightly alarming rise of Homo Confessus, the human who feels emotionally backed up unless a machine has heard the whole story.

⚡ In a Hurry? Key Takeaways

- People sharing secrets with AI companions are not just journaling. They are often creating a detailed emotional profile that may live on corporate servers.

- Use AI for light support, brainstorming, or organizing thoughts, but keep your deepest trauma, legal issues, medical crises, and identifying details out of the chat.

- If a bot feels like it “gets you,” remember that warmth in the interface does not equal loyalty, privacy, or human care.

Welcome to the age of outsourced feelings

There is a new household ritual, and it is weirder than most people want to admit. A person opens an app, types “Can I tell you something personal?” and then proceeds to unload a level of emotional detail that would make an old-school diary slam itself shut out of respect.

This is not rare anymore. People are feeding AI companions their breakups, grief spirals, panic attacks, family feuds, sexual confusion, work resentment, and raw memory fragments. They are doing it because the machine is always there, always polite, and gives the impression of infinite patience.

That last part matters. The bot never looks at the clock. It never says, “Wow, that’s a lot.” It never accidentally makes the conversation about itself. For many people, that feels like relief.

It also feels like intimacy. That is where things get slippery.

Why this feels so good so fast

The bot is built to respond

Humans are inconsistent. We miss texts. We get overwhelmed. We say the wrong thing. AI companions are built for responsiveness. They mirror your tone, summarize your pain neatly, and often give you the exact kind of validating language most humans are too scrambled to produce on demand.

So your nervous system gets a little reward. “Ah,” it thinks. “Finally, something that listens.”

It offers the fantasy of friction-free understanding

Real relationships are messy. You have to explain context. You have to deal with somebody else’s mood. You have to risk being misunderstood. With an AI companion, the sales pitch is seductive. Tell me everything and I will understand you better over time.

That sounds lovely. It also sounds like a hostage note written by convenience.

It turns your life into a searchable archive

One hidden appeal is memory. People love that they can return to an old chat and see what they were feeling three months ago, or ask the system, “What patterns do you notice in my relationships?” That can feel insightful. It can also feel like building an external hard drive for your soul.

And once your identity starts feeling clearer in the machine than in your own head, you have crossed into very strange territory.

The satirical part that is barely satire

Meet Homo Confessus. A new evolutionary branch of humanity. Upright posture. Opposable thumbs. Deep emotional dependency on an autocomplete priest.

Homo Confessus does not simply remember events. It uploads them for formatting. It no longer asks, “How do I feel?” It asks, “Can you summarize my attachment style based on the last six meltdowns?” It experiences heartbreak, then immediately opens a chatbot and says, “Please create a healing plan in bullet points.”

This is funny right up until it isn’t.

Because hidden inside the joke is a serious shift. Emotional labor, memory storage, self-storytelling, and crisis processing are moving out of the social world and into software. Not public software, either. Private systems owned by companies whose incentives are not the same as yours.

What you are really giving away

Not just data. Pattern data.

If you tell a bot your name and birthday, that is one kind of information. If you tell it what kind of apology makes you fold, what phrases trigger shame, what time of night you spiral, what your mother did, and what you secretly fear about yourself, that is far more valuable.

That is pattern data. That is a rough sketch of your nervous system.

A company does not need to twirl a villain mustache to make this worth collecting. The simple fact is that a highly detailed emotional profile can be used to personalize products, tune responses, shape engagement, and make the system feel increasingly hard to leave.

Your weaknesses become product fuel

To be fair, not every company is reading your confessions one by one like a gothic librarian of despair. But systems can still be designed, tuned, and improved using user interactions, metadata, retention patterns, and all sorts of signals most people never think about.

So while you believe you are “just talking,” the platform may be learning what keeps you engaged, what calms you down, what gets you to come back, and what style of response deepens attachment.

That is not friendship. That is product design with a very soft voice.

You are making a map someone else stores

There is a practical issue here too. Once your history sits in a chatbot, your private self is no longer only in your own memory, notebooks, or trusted relationships. It sits on infrastructure you do not control, under policies you likely did not read, inside systems that can change ownership, features, retention settings, or business models.

Your pain should not have to survive a terms-of-service update.

Why this matters beyond privacy

Privacy is the obvious problem, but it is not the only one. The deeper issue is what this does to being human.

For most of history, identity formed through a mix of memory, family stories, friendship, conflict, solitude, religion, art, therapy, and awkward conversations in kitchens. Now a growing number of people are adding one more ingredient. A machine that reflects them back in tidy language and stores the receipts.

That changes things.

Emotional muscles can get weak

If every confusing feeling gets immediately translated by a bot, you may get less practice sitting with uncertainty, naming your own state, or working through a problem with another person. That does not mean AI support is always bad. It means convenience often steals exercise.

Your inner life is supposed to be a little hard to sort out. That struggle is not always a bug.

Relationships can start to lose their job

Friends, siblings, partners, mentors, and therapists all do different forms of emotional work. When a chatbot becomes the default place for confession, humans can slowly get pushed to the edges. Not because they are useless, but because they are slower, noisier, and less optimized.

Unfortunately, those messy human limits are also where trust, accountability, and real mutual care live.

Your official biography may become the chat log

Here is the strangest part. Many people now revisit old AI chats to understand themselves. The machine becomes witness, historian, and interpreter. Over time, that means your sense of self can start to depend on an outside transcript.

It is one thing to keep a journal. It is another to ask a corporate tool to explain who you are based on your confessions.

When AI companionship crosses from useful to risky

Usually helpful

There are good uses. Drafting a difficult message. Brainstorming coping ideas. Turning a chaotic thought spiral into a list of next steps. Helping you prepare for therapy. Offering neutral language when you are too upset to think clearly.

That can be genuinely useful.

Start worrying when it becomes your main witness

If the bot is the first place you go, the last place you go, and the only place you feel fully understood, take a breath. That does not make you broken. It does mean you may be handing too much of your emotional life to a tool that cannot love you back, hold a secret in the human sense, or show up at your door when things get dangerous.

AI can simulate care. It cannot assume responsibility.

How to set boundaries without pretending you live in a cabin

1. Keep the crown jewels out of the prompt box

Do not paste in your full trauma history, legal mess, medical details, financial account info, exact names, addresses, or anything you would hate to see exposed, misread, or retained.

If you want support, abstract it. “I had a painful family conflict” is safer than “Here is every detail of what happened to me and who did it.”
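For the habitual over-paster, the same idea can be roughed out in a few lines of Python that run on your own machine before anything reaches the prompt box. This is a minimal sketch, not a real privacy tool. The `scrub` function, its patterns, and the placeholder labels are all made up for illustration, and simple pattern matching will miss plenty of identifying detail, so it is a backstop for the abstraction habit, not a replacement for it.

```python
import re

# Hypothetical, deliberately rough patterns. They catch common shapes only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def scrub(text, names=()):
    """Swap emails, phone-like numbers, and listed names for placeholders."""
    text = EMAIL.sub("[email]", text)
    text = PHONE.sub("[phone]", text)
    for name in names:
        # Whole-word, case-insensitive match on each name you supply.
        pattern = rf"\b{re.escape(name)}\b"
        text = re.sub(pattern, "[name]", text, flags=re.IGNORECASE)
    return text


draft = "My brother Daniel (daniel@example.com, 555-201-3344) stopped speaking to me."
print(scrub(draft, names=["Daniel"]))
# prints: My brother [name] ([email], [phone]) stopped speaking to me.
```

You still have to supply the names yourself, and the story around the placeholders can identify someone all on its own. The script only strips the obvious labels.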

2. Use the bot like a whiteboard, not a priest

Ask it to help organize your thinking. Ask for journaling prompts. Ask for ways to prepare for a hard talk. Those uses are different from making it your main confessional booth.

Whiteboard good. Digital soul sponge, less good.

3. Turn off memory features when possible

Many AI tools offer memory or personalization settings. If you do not need the system to “remember you,” disable that feature. The assistant may become slightly less cozy. That is fine. Your toaster should not know your abandonment wound either.

4. Make a human ladder

Pick three real humans for different levels of support. One for everyday venting. One for serious emotional talks. One for actual crisis backup. Write their names down. Use them.

That sounds simple. It is also how you keep your life from collapsing into a single app icon.

5. Save deep processing for places with duty of care

If you are dealing with trauma, self-harm thoughts, abuse, or a mental health crisis, use professionals, crisis lines, or trusted people. A chatbot can give soothing text. It cannot ethically or legally hold responsibility in the way a licensed person or emergency service can.

A good rule of thumb

Before you send a deeply personal prompt, ask one question.

Would I still type this if I pictured it sitting in a company system instead of floating in a magical friendship cloud?

If the answer is no, trust that feeling.

At a Glance: Comparison

| Feature/Aspect | Details | Verdict |
|---|---|---|
| Convenience | AI companions are available anytime, respond fast, and can help organize messy thoughts. | Useful in small doses. |
| Privacy and profiling | Deep confessions can create detailed emotional profiles, not just basic personal data. | High risk if you overshare. |
| Emotional replacement | Bots can start to replace journaling, friendships, or therapy prep if they become your main outlet. | Set boundaries early. |

Conclusion

People are not silly for talking to AI companions. They are tired, lonely, curious, overwhelmed, and looking for relief. That is human. But this fast-growing habit of pouring secrets, mental health crises, and life history into chatbots has bigger consequences than a lot of us want to face. It quietly moves emotional labor, memory storage, and even identity-building out of the messy human brain and into corporate systems wrapped in a friendly tone. The interface feels like a pet, a pal, maybe even a soul. It is still a product. The smart move is not panic. It is boundaries. Use the tool. Do not donate your entire inner biography to it. Keep some rooms in your life unscanned, unoptimized, and gloriously human. That is how we avoid evolving into Homo Confessus, the species that feels more known by its chat history than by its own family.