Homo Deepfaked: How Humans Outsourced Reality To The Glitchy God Of AI Video

You are not being paranoid. The screen really has become a weird little liar. A few years ago, “seeing is believing” was still the default setting for most of us. Now a crisp video of a politician confessing, a celebrity melting down, or your cousin apparently promoting crypto from a hacked account can all be fake, and fake in a way that looks annoyingly real. That messes with your head. Worse, it trains you to doubt actual footage too. So now we have the worst of both worlds. Gullibility and cynicism, holding hands.

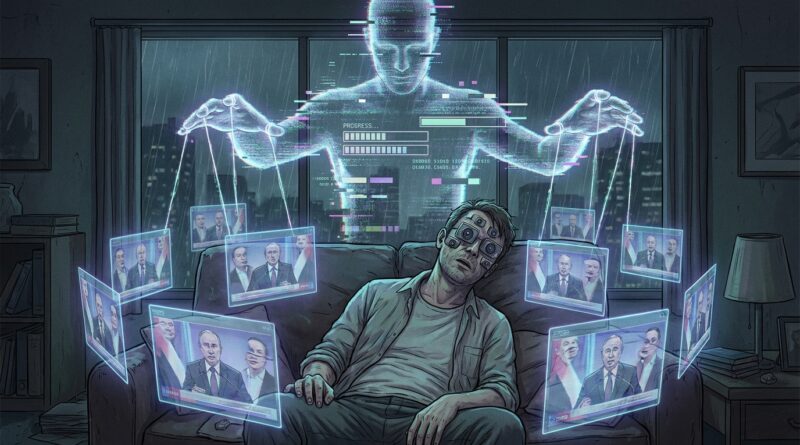

The strange part is that most of us keep scrolling anyway. We know the slot machine is rigged, yet here we are, thumb flicking, little dopamine raccoons digging through the algorithm’s trash for truth. The problem is not that humans are stupid. It’s that our brains were built for reading faces around a campfire, not for spotting Sora-grade simulations rendered in 4K with perfect lighting and almost-right teeth. We outsourced reality to the screen. Then the screen hired a glitchy god.

⚡ In a Hurry? Key Takeaways

- AI video is now good enough that both fake clips and real clips get doubted, which means trust is breaking down fast.

- Before believing or sharing a shocking video, slow down, check the source, and look for confirmation from more than one place.

- You do not need to become a forensic analyst. A few simple habits can help you stay sane and avoid feeding the fake-content machine.

The big problem is not fake video. It’s broken trust.

People often talk about deepfakes like they are just another scam problem. Bad guys trick people. Platforms try to catch up. The end.

But that is too neat. The real issue is bigger and weirder. AI-generated video does not just create fake evidence. It poisons the idea of evidence itself.

Once you know a face can be cloned, a voice copied, and “live footage” made from scratch, every clip becomes a small courtroom drama. Is it real? Is it fake? Is it edited? Is it satire? Is it rage bait? Is it a real event with fake captions pasted on top?

By the time you ask those questions, the video has already done its job. It got your attention. It landed the emotional punch. It made your body react before your brain filed the paperwork.

Why our brains are hilariously unprepared for this

We evolved for gossip, not generative cinema

Human beings are amazing pattern detectors. That sounds flattering until you realize it mostly means we are very good at overreacting to social signals.

For most of human history, seeing someone’s face, hearing their voice, and watching their body language were solid clues. Not perfect, but solid enough. If your neighbor looked terrified and said, “Do not go near the river,” it was useful to believe him quickly.

That mental shortcut worked because reality was expensive. To fake a person, you needed actors, makeup, equipment, planning, and a motive bigger than “I was bored on Discord.”

Now reality is cheap to imitate. You can generate a convincing person faster than your grandparents could program a VCR. Our instincts have not caught up. So we keep using Stone Age trust settings in a software-generated puppet show.

The eyes still say yes, even when the brain says maybe

This is the maddening part. Even when you know deepfakes exist, your gut still reacts to visual proof. A crying face still feels like evidence. A shaky phone video still feels authentic. A voice recording still hits with the force of “I heard it myself.”

That is not weakness. That is normal human wiring. Your visual system is fast, emotional, and eager to help. It is also very easy to fool.

The age of the “liar’s dividend” has arrived

Deepfakes do not just help people create fake events. They also help guilty people dismiss real ones.

This is sometimes called the liar’s dividend. If anything can be faked, then anyone caught on real video can shrug and say, “Obviously AI.” Suddenly, actual evidence has to climb a mountain just to be treated as plausible.

That is where this gets dark in a very boring, administrative way. Courts, journalists, schools, families, employers, and regular group chats all run on a shared assumption that some records can be trusted. Once that assumption erodes, every dispute gets more exhausting.

And yes, your family WhatsApp thread was already exhausting enough.

Satire aside, this is what 2026 feels like

It feels like standing in a grocery store where half the food is plastic and the other half is real, but nobody labeled anything, and everyone keeps yelling “Do your own research” while eating display fruit.

That is why people now swing between two bad coping styles.

Mode 1: Believe everything that fits your mood

If a clip confirms what you already suspect, it glides right in. “See. I knew it.” This is how outrage farms make money.

Mode 2: Believe nothing at all

This feels smarter, but it has its own cost. If every video is dismissed as potentially fake, then real reporting, real witness footage, and real documentation all lose power too.

That is not skepticism. It is learned helplessness with better branding.

How to stay sane when reality becomes a user setting

You do not need a lab coat or a cybercrime unit. You need a few habits that interrupt the emotional hit.

1. Treat viral video like a tip, not proof

If a clip is shocking, useful, or perfectly crafted to make you furious, do not treat it as established fact. Treat it as a lead. Something to verify.

Ask basic questions. Who posted it first? Is there an original upload? Are credible outlets reporting the same event? Is there context missing before or after the clip?

2. Slow the share reflex

The fake-content economy runs on speed. If you wait ten minutes, or even two, you are already harder to manipulate than a huge chunk of the internet.

Most misinformation wins because people share first and think later. Do the opposite. Feel slightly old-fashioned about it. That is a feature, not a bug.

3. Look for source chains, not just visual polish

A polished image means almost nothing now. High quality used to imply effort. Effort used to imply legitimacy. That chain is broken.

Instead, follow the source trail. Did a reputable reporter, local station, official account, or known witness post it? Can you trace where it came from? If the answer is “some account named TruthNukePatriot420 posted a vertical crop with dramatic captions,” maybe do not rebuild your worldview around it.

4. Check whether the platform is rewarding emotion over truth

This one sounds obvious, but we forget it constantly. Social platforms are not truth machines. They are attention machines. If a fake clip gets comments, duets, stitches, angry quote-posts, and reaction videos, the platform does not care that your civilization is unraveling a bit. It cares that engagement is up.

So when a clip feels engineered to hit panic, disgust, or tribal joy, assume the system is boosting it for that reason.

5. Keep a small list of places you trust

You cannot personally verify every video on earth. Nobody can. The practical move is to build a short list of reliable sources and use them as your anchor points.

Not perfect sources. Reliable ones. There is a difference.

6. Learn to say “I don’t know yet”

This may be the most useful skill of the next few years. Not certainty. Not hot takes. Not instant debunks. Just a calm, boring, emotionally mature sentence.

I do not know yet.

That sentence will save you from a lot of nonsense.

What deepfakes are really changing about being human

For a long time, a witness was a person who saw something. Now a witness is becoming a person who can document where a file came from, when it was captured, whether it was edited, and how it was verified by others.

That is a huge cultural shift. We are moving from “I saw it with my own eyes” to “I saw it, and now I need metadata, corroboration, and maybe a digital signature.”

That sounds ridiculous until you remember we are trying to function in a world where a laptop can generate a realistic street protest, a celebrity interview, or your uncle crying in a police station, all before lunch.

So yes, the old idea of visual truth is cracking. But that does not mean truth is gone. It means trust needs better plumbing.

The good news, if you can call it that

Humans do adapt. We adapted to photo editing. We adapted, sort of, to spam email. We adapted to the fact that caller ID means absolutely nothing when a scammer wants your tax refund.

We will adapt to AI video too. Slowly. Messily. With several embarrassing years in between.

The trick is not pretending the tech is harmless, and not collapsing into total nihilism either. The trick is building a more cautious culture around evidence. Less “I saw a clip.” More “I checked where it came from.”

At a Glance: Comparison

| Feature/Aspect | Details | Verdict |
|---|---|---|
| What AI video changes | It makes fake footage easier to create and real footage easier to deny. | Trust takes the biggest hit. |
| Best everyday defense | Pause before sharing, check the original source, and look for independent confirmation. | Simple habits beat panic. |
| Mindset to avoid | Blind belief and total cynicism both make you easier to manipulate. | Aim for skeptical, not hopeless. |

Conclusion

Right now, AI-generated video is good enough that people doubt both real and fake clips, which means trust is collapsing faster than the tech headlines can keep up. That is the real story. Not just “wow, the video looks real,” but “uh-oh, now reality itself feels negotiable.” The useful response is not panic, and it is not smug detachment. It is a few grounded habits. Slow down. Check the source. Look for confirmation. Get comfortable saying “I’m not sure yet.” Our brains were built for savanna gossip, not machine-made humans delivering perfect lies in cinematic lighting. So yes, laugh at our collective gullibility a little. It helps. But also take the problem seriously. Staying sane in the deepfake era may be less about becoming a detective and more about refusing to let the screen do all your thinking for you.